As we navigate the tumultuous geopolitical landscape of March 2026, the nature of warfare has fundamentally shifted. While the devastating imagery coming out of protracted conflicts in Ukraine, Gaza, and Sudan shows familiar scenes of rubble and displacement, the mechanisms driving this destruction have evolved rapidly. We are no longer merely witnessing mechanized warfare; we are deep into the era of algorithmic combat. The integration of Artificial Intelligence (AI) in warfare and the ubiquity of drone technology have accelerated the tempo of destruction, creating an unprecedented crisis for civilian populations and eroding humanitarian law.

While military strategists tout the precision of these technologies, the reality on the ground tells a different story: one of systemic infrastructure collapse, massive civilian displacement, and a terrifying erosion of International Humanitarian Law (IHL). The seductive promise of a “cleaner,” high-tech war is proving to be a dangerous illusion. Instead, the push for technological supremacy and “victory at any cost” is dismantling the very guardrails designed to protect humanity during conflict.

The Algorithmic Battlefield and the Death of Distinction

The most profound shift in modern combat is the delegation of life-and-death decisions to algorithms. AI in warfare is no longer science fiction; it is the operational standard. From the steppes of Eastern Europe to the dense urban centers of the Middle East, militaries are using machine learning to process vast amounts of surveillance data and identify targets faster than any human analyst could.

The humanitarian crisis stems from the inherent limitations of these systems regarding the core tenet of IHL: the principle of distinction. This principle mandates that warring parties distinguish at all times between combatants and civilians.

While AI can identify patterns, it lacks the nuanced human judgment necessary in complex, fluid environments like Gaza or urban Sudanese cities. An algorithm may flag an individual based on metadata (location, associations, phone usage) rather than confirmed hostile intent. When the speed of algorithmic targeting outpaces meaningful human verification, the “fog of war” becomes digitized. The result is a broadening of what is considered a legitimate target, inevitably leading to higher civilian casualties that are coldly categorized as “algorithmic errors” rather than violations of law.

The Drone Age: Persistent Terror and Sanitized Violence

Parallel to the rise of AI is the absolute saturation of the battlefield with unmanned aerial systems. The impact of drone warfare goes beyond the immediate destruction of a missile strike; it fundamentally alters the psychology of daily life for populations under siege.

In Ukraine, the constant buzz of loitering munitions has made every open space a potential kill zone, paralyzing civilian movement and the delivery of aid. In other theaters, cheap, weaponized commercial drones have democratized airpower, allowing non-state actors to inflict massive damage on infrastructure and further destabilize fragile regions.

Critically, drone warfare risks “sanitizing” violence for the operators. Engaging targets through a screen thousands of miles away can psychologically distance the attacker from the human consequences of the strike. This detachment can lower the threshold for using lethal force, threatening the IHL principle of proportionality, which forbids attacks where civilian harm is excessive in relation to the concrete military advantage gained. When the risk to friendly forces is near zero, the incentive to exercise restraint diminishes, often at the expense of the civilian population living under the drones’ gaze.

Case Studies in 2026: A Global Erosion of Humanitarian Law

The theoretical risks of military tech are now the grim reality of March 2026.

In Gaza, the convergence of dense population and advanced AI targeting systems has resulted in unprecedented levels of infrastructure collapse. The reliance on AI to generate target lists at industrial speeds has meant that residential blocks, hospitals, and water sanitation facilities are often struck because algorithms identify proximity to combatants as sufficient grounds for attack. The long-term habitability of the strip is now in question.

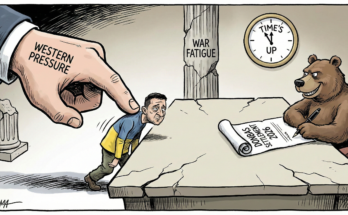

In Ukraine, the war has evolved into a high-tech duel of autonomous systems. The widespread use of electronic warfare to jam drones has led to the development of fully autonomous lethal weapons that can complete a kill chain without human communication—a terrifying escalation that bypasses traditional command responsibility.

Simultaneously, in Sudan, the proliferation of inexpensive drone technology among various factions has made humanitarian access nearly impossible. Aid convoys are easily tracked and targeted, weaponizing starvation and exacerbating one of the world’s worst displacement crises.

The Erosion of Humanitarian Law

The Geneva Conventions were written for a world of human soldiers, clear front lines, and kinetic weapons. They are woefully inadequate for an era of data-driven warfare and autonomous swarms.

The speed at which technology is being deployed on the battlefield vastly outpaces diplomatic efforts to regulate it. We are witnessing a de facto rewriting of the laws of war based on technological capability rather than ethical consensus.

The most significant casualty is accountability. When an autonomous drone swarm, operating on complex AI logic, strikes a refugee camp, who is responsible? The programmer? The commander who deployed it? The manufacturer? This “accountability void” encourages recklessness. If no individual can be reliably held responsible for a war crime committed by a machine, the deterrent power of international law evaporates.

Furthermore, the precision of these weapons has paradoxically led to the normalization of targeting “dual-use” infrastructure. Power grids, data centers, and transport hubs are destroyed under the guise of degrading military capabilities, but the immediate and lasting impact is felt most severely by civilians, plunging millions into darkness and cutting them off from essential services.

Conclusion: Reclaiming Humanity in the Age of Machines

As we survey the state of global conflict in early 2026, the trajectory is alarming. The unseen toll of AI and drone warfare is measured not just in the immediate body count, but in the systematic dismantling of the rules that govern civilized behavior during conflict.

Technology is not morally neutral; its application in warfare is a choice. The current path prioritizes speed, lethality, and force protection over civilian life and legal obligation. To reverse this erosion of humanitarian law, the international community must move beyond slow-moving multilateral discussions and establish firm, enforceable red lines regarding human control over lethal force.

We must insist that the principles of distinction and proportionality are not outdated concepts to be “disrupted” by technology, but non-negotiable foundations of humanity. If we fail to regulate these tools now, we risk entering a future where the laws of war are dictated solely by an algorithm’s cold calculus, and the concept of a “protected civilian” becomes a relic of the past.